The official ranking at Top500.org lists the world's most powerful supercomputers. As Intel proudly reports, Intel-based systems are currently on the rise and gradually closing in on the top of the field. A total of 56 Intel-based machines now appear among the 500, compared with just two about three years ago. More in the press release:

"BALTIMORE, Nov. 18, 2002 - The TOP500 supercomputer rankings released today at the Supercomputing 2002 conference show a dramatic increase in the number of Intel-based systems deployed in high-performance computing (HPC), or supercomputing, areas. Traditionally made up almost exclusively of proprietary RISC-based supercomputers, the TOP500 list now includes 56 Intel-based systems, versus just two as recently as three years ago.** The new list includes two Intel-based clusters in the top ten. Newly added Intel-based clusters include those at Lawrence Livermore National Laboratory (No. 5), the National Oceanic and Atmospheric Administration (NOAA) Forecast Systems Laboratory (No. 8), Louisiana State University (No. 17) and Pacific Northwest National Laboratory (No. 61).

"Moore's Law has pushed the performance of Intel-based computing platforms to the forefront of scientific and business innovation. These platforms, based on cost-effective Intel processors and commercial off-the-shelf networking technologies, have radically changed grid and HPC deployments," said Tom Gibbs, director of industry solutions at Intel. "By combining highly scalable performance and affordable prices, Intel is helping to hasten discoveries and innovation through worldwide grid computing initiatives in areas such as life sciences, bioinformatics, weather predictions, financial modeling and energy."

Intel offers a competitive, top-to-bottom set of HPC solutions that includes Intel® Itanium® 2, Intel Xeon™ and Pentium® 4 processors; platform architectures; interconnect and networking technology; software tools; and services, all aimed at the full range of government, industry and academic market segments and software applications.

Because clustering (using networked servers or workstations as a single system to solve a large problem) offers the potential for significant price savings, the Aberdeen Group predicted last year that Intel-based clusters would account for at least 80 percent of the high-performance computing market within three years.***

Likewise, research firm IDC projects the high-performance computing cluster market will climb to $1.6 billion in 2006, up from $494 million in 2001.****

Large-scale computational centers such as the National Center for Supercomputing Applications (NCSA), the Cornell Theory Center and the European Organization for Nuclear Research in Geneva (CERN) are leading the shift away from RISC systems toward commercial, off-the-shelf applications running on industry-standard platforms and interconnects. In each case, the organization is deploying Itanium 2-based systems.

"Clusters based on commercial, off-the-shelf components such as Intel's Itanium 2 and Pentium 4 processors offer the scalability, performance and reliability that our users need to solve the large, complex problems of science," said NCSA director Dan Reed, the chief architect of the TeraGrid project. "With TeraGrid funding, we will soon complete deployment of 10 teraflops of Intel-based Linux clusters at NCSA. This will complement two teraflops of Intel-based Linux clusters already in production at NCSA."

Grid Computing: the Next Wave of HPC

In the past, supercomputers were expensive, monolithic machines from a single source. Access was restricted, which made it difficult to deploy these systems outside specialized supercomputing centers. In the 1990s, RISC-based HPC solutions delivered better price/performance than specialized supercomputers, but they were built from single-source proprietary components and remained costly.

By the mid-1990s, the performance of Intel processors had reached the point where real supercomputing could be done on clustered Intel-based platforms. Today, Intel processors power some of the fastest computers in the world. More importantly, the price points enabled by scalable Intel-based platforms have expanded HPC beyond the confines of supercomputer centers, making these systems available to a broad community of users in mainstream industries, as well as to thousands of smaller but influential scientific, research and academic organizations. Intel-based solutions are rapidly becoming the platform of choice because of their superior price/performance and scalability, support for open-source development, and broad multi-vendor environment.

Grid computing takes the HPC philosophy further by linking desktops, clusters and large symmetric multiprocessing (SMP) systems across multiple geographic locations, creating a single, virtual computing resource. This enables the integrated, collaborative use of high-end computing systems, networks, data archives and scientific instruments that are operated and accessed by multiple organizations.

Intel is already enabling grid deployments around the world, including the Singapore BioIT Grid, the National Science Foundation's Distributed Terascale Facility (TeraGrid) and The Search for a Cancer Cure public grid with the National Foundation for Cancer Research and Oxford University.

About the TOP500

The semi-annual TOP500 list tracks the world's highest-performing supercomputers and is compiled by Hans Meuer of the University of Mannheim, Erich Strohmaier and Horst Simon of the U.S. Department of Energy's National Energy Research Scientific Computing Center, and Jack Dongarra of the University of Tennessee. For more information, visit www.top500.org."
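The IDC projection quoted in the press release ($494 million in 2001 rising to $1.6 billion in 2006) implies a compound annual growth rate. A minimal Python sketch of that arithmetic, assuming simple annual compounding over the five years from 2001 to 2006 (the helper name `cagr` is our own illustration, not an IDC figure or method):

```python
def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate that turns `start` into `end` over `years` years."""
    return (end / start) ** (1 / years) - 1


# IDC figures quoted in the press release, in millions of US dollars.
start_2001 = 494.0
end_2006 = 1600.0

# Assumption: growth compounds once per year over 2006 - 2001 = 5 years.
rate = cagr(start_2001, end_2006, 2006 - 2001)
print(f"Implied annual growth: {rate:.1%}")  # prints "Implied annual growth: 26.5%"
```

With these inputs the implied rate is roughly 26.5 percent per year. This is our own derivation from the two quoted figures, not a number stated by IDC.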

Stellen Sie sich vor, Sie arbeiten an einem aufwendigen 3D-Modell oder bearbeiten 4K-Videos - und Ihr Computer hängt sich auf...

Online-Zahlungsmethoden sind längst kein bloßes Mittel zum Zweck mehr ‒ sie sind Teil unserer digitalen Identität. Für viele, die sich...

Speicherspezialist Seagate gibt heute die weltweite Verfügbarkeit der neuen Exos M und IronWolf Pro Festplatten mit bis zu 30 TB...

Philips präsentiert mit dem neuen 68,58 cm (27") Evnia 27M2N3800A einen Premium-Gaming-Monitor der nächsten Generation. Das Besondere an diesem Modell:...

Desktop-PCs bleiben daheim, das Handy kann einen vollwertigen Computer nicht immer ersetzen. Es hat gute Gründe, warum du dein Laptop...

Seagate stellt die Exos M und IronWolf Pro Festplatten mit 30 TB vor. Die neuen Laufwerke basieren auf der HAMR-Technologie und sind für datenintensive Workloads bestimmt. Wir haben beide Modelle vorab zum Launch getestet.

Mit der T710 SSD stellt Crucial seine 3. Generation PCI Express 5.0 SSDs vor. Die neuen Drives bieten bis zu 14.900 MB/s bei sequentiellen Zugriffen und sind ab sofort erhältlich. Wir haben das 2-TB-Modell ausgiebig getestet.

Mit der XLR8 CS3150 bietet PNY eine exklusive Gen5-SSD für Gamer an. Die Serie kommt mit vormontierter aktiver Kühlung sowie integrierter RGB-Beleuchtung. Wir haben das 1-TB-Modell ausgiebig getestet.

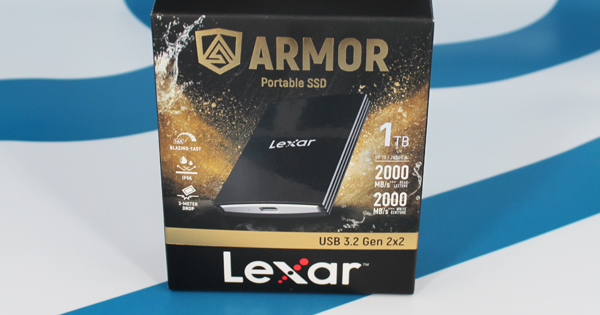

Die Armor 700 Portable SSD von Lexar ist gemäß Schutzart IP66 sowohl staub- als auch wasserdicht und damit perfekt für den Outdoor-Einsatz geeignet. Mehr dazu in unserem Test des 1-TB-Exemplars.